Generative AI is rewriting software development at unprecedented speed. But as AI-generated code floods production systems, a critical question emerges: when something breaks, who takes responsibility? In a world racing to automate everything, Japan’s engineering culture — built on ownership, precision, and an almost stubborn refusal to cut corners — may be exactly what the global tech industry needs.

The Accountability Crisis in AI-Powered Development

The numbers are alarming. A 2025 CodeRabbit study found that AI-generated code is 1.88x more likely to introduce improper password handling, 2.74x more likely to add XSS vulnerabilities, and 1.82x more likely to implement insecure deserialization than human-written code. Veracode’s research showed that 45% of AI-generated code samples failed security tests, with Java hitting a 72% failure rate.

Yet adoption is accelerating. Nearly 60% of U.S. enterprises are experimenting with generative AI, but only 9% feel prepared to handle the risks (PwC 2025 Responsible AI Survey). The Georgetown CSET report warns that tracking the origin of AI-generated code and assigning responsibility for errors remains fundamentally unclear.

This is the accountability gap: everyone is using AI to write code, but nobody is stepping up when that code fails.

The “Robot” Stereotype — Reframed

Japanese engineers have long been labeled “workaholics” or even “robotic” by Western observers. The stereotype carries an implicit criticism: too rigid, too process-driven, too unwilling to move fast and break things.

But strip away the cultural bias and look at what that “robotic” behavior actually represents: an engineer who reads every line of code before it ships. Who tests edge cases that nobody asked them to test. Who stays late not because a manager demanded it, but because they feel personally responsible for what their name is attached to.

This is not workaholism. This is ownership.

The Japanese concept of monozukuri (ものづくり) — literally “making things” — captures this mindset. As documented in Japan’s Basic Law for Promoting Monozukuri Foundation Technology, it’s not just about the finished product. It encompasses design, quality control, testing, and even cleanup. Where Western engineers might aim to “meet requirements,” engineers trained in the monozukuri tradition aim to honor the craft itself.

When AI Breaks Things, Culture Determines Who Fixes Them

Consider a realistic scenario: a production system built partly with AI-generated code experiences a critical security breach. The AI assistant that wrote the vulnerable function doesn’t answer pages at 3 AM. It doesn’t feel urgency. It doesn’t have a reputation to protect.

Someone human has to:

- Interpret the AI-generated code — understanding not just what it does, but what it was supposed to do and where the gap lies

- Assess the blast radius — determining which systems are affected and what data may be compromised

- Take decisive action — rolling back, patching, and communicating, all under pressure

- Prevent recurrence — analyzing root causes and implementing guardrails

This requires engineers who don’t just write code — they own code. Engineers who feel personally accountable for production systems, regardless of whether a human or an AI wrote the original lines.
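To make the "assess the blast radius" step concrete, here is a minimal sketch, assuming a team keeps a map of which services call which. The service names and the `DEPENDENTS` map are hypothetical, invented purely for illustration; a real system would pull this from a service catalog or dependency scanner.

```python
from collections import deque

def blast_radius(dependents, start):
    """Return every service that directly or transitively depends on `start`.

    `dependents` maps a service name to the services that call it.
    This is a plain breadth-first search over that map.
    """
    affected = set()
    queue = deque([start])
    while queue:
        service = queue.popleft()
        for downstream in dependents.get(service, ()):
            if downstream != start and downstream not in affected:
                affected.add(downstream)
                queue.append(downstream)
    return affected

# Hypothetical dependency map (service -> services that call it).
DEPENDENTS = {
    "auth-lib": ["login-api", "billing-api"],
    "login-api": ["web-frontend"],
    "billing-api": ["web-frontend", "invoice-worker"],
    "search-api": ["web-frontend"],
}

if __name__ == "__main__":
    # Which services are exposed if the AI-generated code in auth-lib is vulnerable?
    print(sorted(blast_radius(DEPENDENTS, "auth-lib")))
```

The traversal is the easy part. Deciding what the result means for customers and data still falls to the engineer who owns the system.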

Research from the Academy of Business Administration Science confirms that Japanese work culture is built on “intense commitment, ethics, and extreme dedication,” with collective responsibility embedded at every level. The concept of sekininkan (責任感) — an internalized sense of duty — means Japanese engineers don’t wait to be told something is their problem. They assume it is.

The Data: AI Code Quality Is Getting Worse, Not Better

The assumption that AI-generated code will improve on its own over time is not supported by current evidence. Compared with human-written code, it currently fares as follows:

| Metric | AI-Generated Code | Human-Written Code | Source |
|---|---|---|---|
| XSS vulnerabilities | 2.74x more likely | Baseline | CodeRabbit 2025 |
| Excessive I/O operations | ~8x more common | Baseline | CodeRabbit 2025 |
| Security test failure rate | 45% | Not reported | Veracode 2025 |
| Design flaws present | 62% of solutions | Not reported | CSA 2025 |
| Insecure deserialization | 1.82x more likely | Baseline | CodeRabbit 2025 |

As Stanford researchers found, security actually degrades with iterative AI code generation — each round of AI refinement introduces new vulnerability patterns. The Cloud Security Alliance warns that AI-generated code tends to be “simpler and more repetitive, yet more prone to unused constructs and hardcoded debugging.”

In other words: AI writes code that looks clean but hides structural weaknesses. Catching these requires engineers who read deeply, question assumptions, and refuse to rubber-stamp AI output.

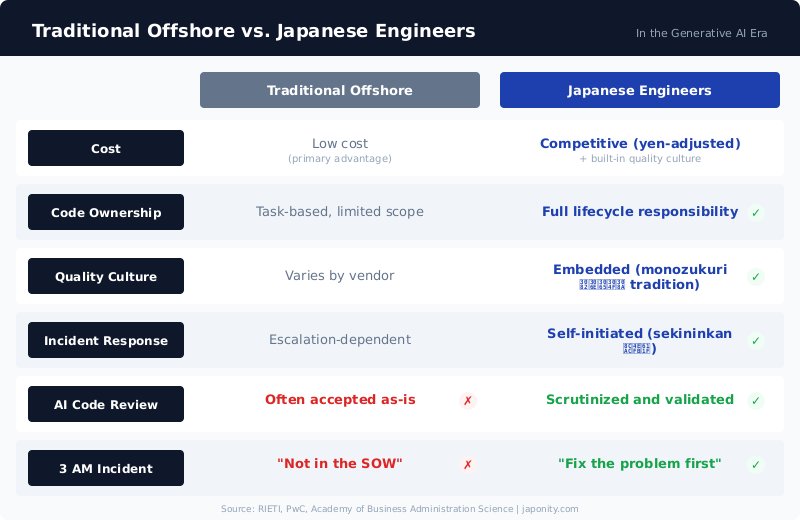

The Weak Yen Makes Japanese Engineers the World’s Best Value

Here’s where economics meets culture. The Japanese yen has depreciated significantly against major currencies, and as RIETI research documents, this shift is structural, not temporary.

The result: Japanese engineers now offer exceptional cost-to-quality ratios. Companies that previously outsourced to Southeast Asia for cost savings can now access Japanese engineering talent — with its built-in quality culture — at increasingly competitive rates.

But this isn’t about finding “cheap labor.” It’s about finding responsible labor.

What Outsourced Teams Won’t Do at 3 AM

Traditional outsourcing operates on a transactional model: deliver the spec, bill the hours, move to the next ticket. When an AI-generated function causes a production incident at 3 AM, the typical outsourced contractor’s response is predictable: it’s not in the statement of work (SOW), it’s not their shift, it’s not their problem.

Japanese engineers operate differently. The cultural framework of atarimae hinshitsu (当たり前品質) — the idea that “things should work as they’re supposed to” — creates an internal standard that goes beyond contractual obligations. When something breaks, the instinct is not to check the contract. It’s to fix the problem.

In the generative AI era, this distinction becomes critical. AI doesn’t just introduce new bugs — it introduces unfamiliar bugs. Patterns that no human would write. Edge cases that no test suite anticipates. The engineers who will navigate this landscape successfully aren’t the ones who accept AI output uncritically. They’re the ones who treat every AI-generated line with the same scrutiny they’d apply to a junior developer’s first pull request.

The Opportunity for International Companies

As AI reshapes software development, international companies face a choice: race to the bottom with fully automated, accountability-free development pipelines, or invest in engineering teams that combine AI productivity with human responsibility.

Japan shows what the second option looks like in practice. Not anti-AI, but AI-accountable. Japanese engineering organizations are adopting generative AI tools while maintaining the cultural infrastructure (code reviews, quality gates, ownership mentality) that keeps AI-generated mistakes from reaching production.
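What an "AI-accountable" quality gate might look like can be sketched in a few lines. This is an illustrative assumption, not a description of any particular organization's pipeline: the `Change` record, its fields, and the rules in `gate_failures` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Change:
    """Hypothetical record describing a proposed code change."""
    author: str
    ai_assisted: bool
    approvers: List[str] = field(default_factory=list)
    owner: Optional[str] = None  # named human accountable in production
    tests_passed: bool = False
    security_scan_passed: bool = False

def gate_failures(change: Change) -> List[str]:
    """Return the reasons a change is blocked; an empty list means it may ship."""
    reasons = []
    if not change.tests_passed:
        reasons.append("tests did not pass")
    if not change.security_scan_passed:
        reasons.append("security scan did not pass")
    # Self-approval does not count as review.
    independent = [a for a in change.approvers if a != change.author]
    if not independent:
        reasons.append("no independent human review")
    # AI-assisted code must have a named person who answers for it.
    if change.ai_assisted and change.owner is None:
        reasons.append("AI-assisted change has no named owner")
    return reasons

if __name__ == "__main__":
    risky = Change(author="aiko", ai_assisted=True, approvers=["aiko"],
                   tests_passed=True, security_scan_passed=True)
    print(gate_failures(risky))
```

The last rule is the one that matters here: the gate does not care whether a human or an AI wrote the lines, only that a named person has agreed to answer for them.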

For companies looking to build this capability, Japonity connects international businesses with Japanese engineering organizations that embody this approach. Not outsourcing in the traditional sense — but partnership with teams that treat your product as their own.

Because in the age of AI, the most valuable engineer isn’t the one who writes code the fastest. It’s the one who takes responsibility when that code fails.
